These weird, unsettling photos show that AI is getting smarter

Of all the AI models in the world, OpenAI's GPT-3 has most captured the public's imagination. It can spew poems, short stories, and songs with little prompting, and has been demonstrated to fool people into thinking its outputs were written by a human. But its eloquence is more of a parlor trick, not to be confused with real intelligence.

Nonetheless, researchers believe that the techniques used to create GPT-3 could contain the secret to more advanced AI. GPT-3 trained on an enormous amount of text data. What if the same methods were trained on both text and images?

Now new research from the Allen Institute for Artificial Intelligence, AI2, has taken this idea to the next level. The researchers have developed a new text-and-image model, otherwise known as a visual-language model, that can generate images given a caption. The images look unsettling and freakish-nothing like the hyperrealistic deepfakes generated by GANs-but they might demonstrate a promising new direction for achieving more generalizable intelligence, and perhaps smarter robots as well.

Fill in the blankGPT-3 is part of a group of models known as transformers," which first grew popular with the success of Google's BERT. Before BERT, language models were pretty bad. They had enough predictive power to be useful for applications like autocomplete, but not enough to generate a long sentence that followed grammar rules and common sense.

BERT changed that by introducing a new technique called masking." It involves hiding different words in a sentence and asking the model to fill in the blank. For example:

- The woman went to the ___ to work out.

- They bought a ___ of bread to make sandwiches.

The idea is that if the model is forced to do these exercises, often millions of times, it begins to discover patterns in how words are assembled into sentences and sentences into paragraphs. As a result, it can better generate as well as interpret text, getting it closer to understanding the meaning of language. (Google now uses BERT to serve up more relevant search results in its search engine.) After masking proved highly effective, researchers sought to apply it to visual-language models by hiding words in captions, like so:

A ____ stands on a dirt ground near a tree.AI2

A ____ stands on a dirt ground near a tree.AI2This time the model could look at both the surrounding words and the content of the image to fill in the blank. Through millions of repetitions, it could then discover not just the patterns among the words but also the relationships between the words and the elements in each image.

The result is models that are able to relate text descriptions to visual references-just as babies can make connections between the words they learn and the things they see. The models can look at the photo below, for example, and write a sensible caption like Women playing field hockey." Or they can answer questions about it like What is the color of the ball?" by connecting the word ball" with the circular object in the image.

A visual-language model would be able to sensibly caption this photo: Women playing field hockey."JOHN TORCASIO / UNSPLASHA picture is worth a thousand words

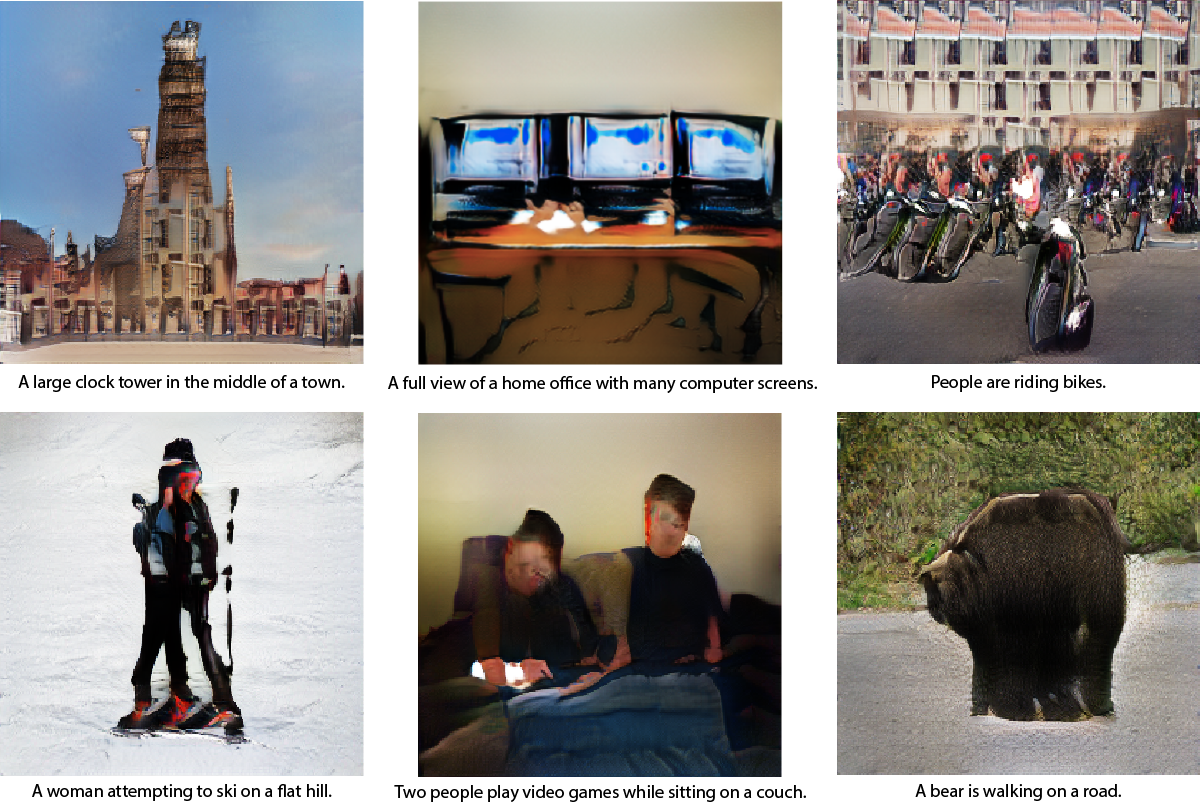

A visual-language model would be able to sensibly caption this photo: Women playing field hockey."JOHN TORCASIO / UNSPLASHA picture is worth a thousand wordsBut the AI2 researchers wanted to know whether these models had actually developed a conceptual understanding of the visual world. A child who has learned the word for an object can not only conjure the word to identify the object but also draw the object when prompted with the word, even if the object itself is not present. So the researchers asked the models to do the same: to generate images from captions. All of them spit out nonsensical pixel patterns instead.

It's a bird! It's a plane! No, it's just gobbledygook generated by AI.AI2

It's a bird! It's a plane! No, it's just gobbledygook generated by AI.AI2It makes sense: transforming text to images is far harder than the other way around. A caption doesn't specify everything contained in an image, says Ani Kembhavi, who leads the computer vision team at AI2. So a model needs to draw upon a lot of common sense about the world to fill in the details.

If it is asked to draw a giraffe walking on a road," for example, it needs to also infer that the road is more likely to be gray than hot pink and more likely to be next to a field of grass than next to the ocean-though none of this information is made explicit.

So Kembhavi and his colleagues Jaemin Cho, Jiasen Lu, and Hannaneh Hajishirzi decided to see if they could teach a model all this implicit visual knowledge by tweaking their approach to masking. Rather than train the model just to predict masked words in the captions from the corresponding photos, they also trained it to predict masked pixels in the photos on the basis of their corresponding captions.

The final images generated by the model aren't exactly realistic. But that isn't the point. They contain the right high-level visual concepts-the AI equivalent of a child drawing a stick figure to represent a human. (You can try out the model for yourself here.)

Examples of images generated by AI2's model from the captions below them.AI2

Examples of images generated by AI2's model from the captions below them.AI2The ability of visual-language models to do this kind of an image generation represents an important step forward in AI research. It suggests the model is actually capable of a certain level of abstraction, a fundamental skill for understanding the world.

In the long term, this could have implications for robotics. The better a robot is at understanding its visual surroundings and using language to communicate about them, the more complex the tasks it will be able to carry out. In the short term, this type of visualization could also help researchers better understand exactly what black box" AI models are learning, says Hajishirzi.

Moving forward, the team plans to experiment more to improve the quality of the image generation and expand the model's visual and linguistic vocabulary to include more topics, objects, and adjectives.

Image generation has really been a missing puzzle piece," says Lu. By enabling this, we can make the model learn better representations to represent the world."