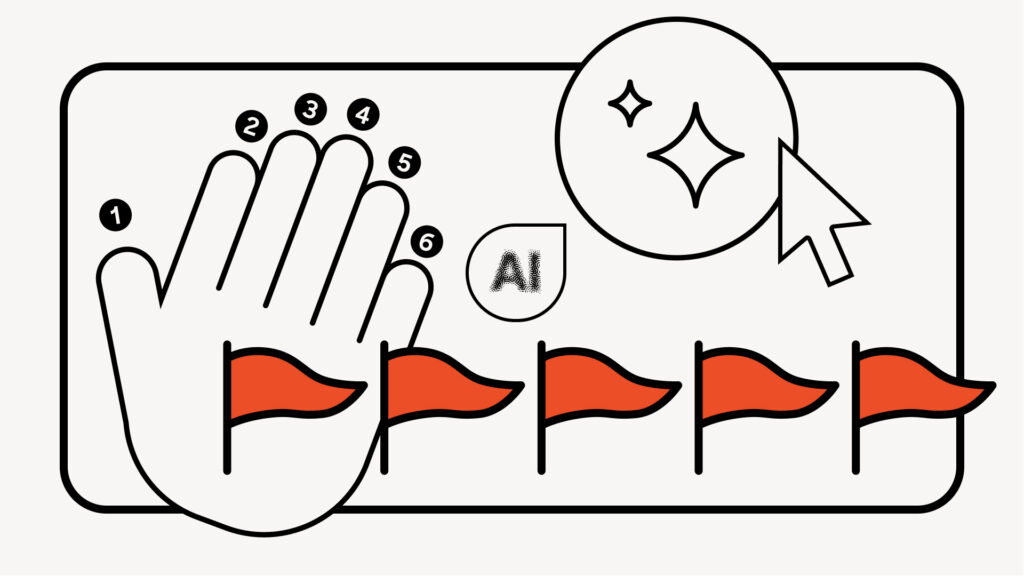

Misinformation in the age of AI: It’s in the details (like extra fingers)

As you scroll through social media, the posts blend together: a heroic cat, a shocking statistic, a breaking news clip. But in a world where AI blurs the line between fact and fiction, how do you tell what's real from what's misinformation?

The short answer: Most of the time, you can't. Generative AI tools have made it faster, cheaper, and easier to create convincing fakes, flooding our feeds with AI-generated content. But here's what you can do: learn to spot the signs of misinformation.

What should I think about when trying to detect AI?

Just looking out for obvious AI will mean missing a lot of it. Retrain your brain to assess social media posts using a framework similar to the ones used by AI-misinformation researchers. Consider who's behind the post, whether the content makes sense, how it's written, the emotions it evokes and any signs of manipulation.

User

- Who is posting this? Is it a reliable source? Is this account tied to a real-world institution that I trust?

- What is the username length? Is it a long set of random numbers and letters? Is it a verified account? Does it only have a handful of followers (who also look random or not real)?

- Does the framing make sense? What is this content about? Is it especially vague or seems so outrageous that it couldn't be true? Does this contradict what you already know about the topic?

- Are there platform flags that the content could be potentially misleading, or a comment section full of claims that it's false? Are there AI badges or indicator hashtags such as #AI, #satire, #skit, #spoof or #AD?

- How is it written? Is there poor or wooden-sounding grammar? Is it flowery? Is there unnatural repetition, or has the user posted the same thing several times?

- Does it repeat often-used AI words such as "elevate," "captivate," "tapestry" or "delve"? Does it use known AI phrases such as "provided valuable insights," "an indelible mark," or "a rich tapestry"? (Of course, these words and phrases don't definitively mean that the content is AI-generated misinformation; they're just reasons to take a closer look.)

- Is this an especially emotion-laden post? Is the level of emotion appropriate for the situation?

- Does the post appear to "weaponize" emotion or tell readers how to feel about the content, such as by using more anger and swear words? (Keep in mind that bots on social media can and do use profanity.)

- What might someone have to gain by touching on your emotions in this way? What's the worst-case scenario if this turns out to not be true? What might a user (using AI) be hoping you don't look up?
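For readers who like to tinker, two of the checks above (random-looking usernames and often-used AI wording) could be roughly sketched in Python. This is only a toy illustration: the function names and the thresholds (a handle of 12 or more characters with at least four digits) are made up for this example, not rules used by researchers, and a hit is a reason to take a closer look, not proof of anything.

```python
import re

# Words and phrases listed above as common in AI-written text.
AI_WORDS = ("elevate", "captivate", "tapestry", "delve")
AI_PHRASES = ("provided valuable insights", "an indelible mark", "a rich tapestry")


def username_looks_random(username: str, min_length: int = 12, min_digits: int = 4) -> bool:
    """Hypothetical rule of thumb: a long handle containing many digits."""
    digits = sum(ch.isdigit() for ch in username)
    return len(username) >= min_length and digits >= min_digits


def ai_wording_hits(text: str) -> list[str]:
    """Return the listed phrases and words that appear in the text."""
    lowered = text.lower()
    hits = [phrase for phrase in AI_PHRASES if phrase in lowered]
    # Match on the word stem so "delving" or "elevated" count too.
    hits += [word for word in AI_WORDS if re.search(rf"\b{word[:-1]}\w*", lowered)]
    return hits


if __name__ == "__main__":
    print(username_looks_random("user8392017465xk"))
    print(ai_wording_hits("Let's delve into a rich tapestry of ideas."))
```

Plenty of real people have digits in their handles and use the word "delve," so treat the output as a prompt to verify, never as a verdict.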

Gone are the days when every AI image looked like a wacky Pixar knockoff, but it's still worth checking for these known cues:

- Too many fingers, hands or teeth, or impossibly long arms

- Hyper-realistic images or those that look like paintings

- Texture issues in the background, midground and around the corners and edges of an image

- Unnatural smoothness, especially on faces, clothes or hair

- Shadows and light coming from the wrong place, or with only certain elements casting shadows

- Abrupt transitions, either in an image or a video

Tools like TrueMedia.org scan social posts for false faces, voices, and information, while Mozilla's Deepfake Detector analyzes text using multiple detection engines and generates a confidence score indicating the likelihood that the content is AI-generated. But while AI detection accuracy is improving, it isn't perfect.

It always helps to try to verify the information itself: search for it along with "fact check" and look for trusted sources. For images, try a reverse image search using Google Image Search or Bing Image Match.

What can misinformation look like on TikTok?

Every social media site has its own AI landscape. Fake news, images and news clips targeting young voters and consumers are circulated particularly widely on TikTok due to its young user base. "Content farms" spin out inaccurate or misleading videos, often several a day, in the distinctive TikTok style of on-screen text read by an AI voice.

When scrolling on TikTok, be skeptical of, or at least get a second opinion on, any informational videos that aren't read by real people or that consist only of captions set to an AI voice (reputable news sites usually show who's talking to build trust). Profiles that look like news sites but have no comments or likes (especially for celebrity news) are a red flag, as are canned phrases like "creepy response" or "finally broke their silence" meant to drive clicks.

What can misinformation look like on X?

Though many AI-generated posts on X are innocuous text-based posts, videos in particular are more likely to be political deepfakes. And while the crowdsourced moderation feature, "Community Notes," allows users to annotate posts with context or warnings, it replaced a more robust monitoring operation, which means users are more likely to encounter bots.

Stay wary of accounts that only spam replies, or situations where multiple accounts are commenting similar things under a post. If a user only posts replies, especially to inflammatory content, it's a red flag that it's a bot searching for certain keywords.
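The "multiple accounts commenting similar things" pattern can be illustrated with a few lines of Python. This is a minimal sketch under stated assumptions: the replies are supplied by hand as (account, text) pairs, the function name `similar_reply_pairs` and the 0.8 similarity threshold are hypothetical, and it uses the standard library's `difflib.SequenceMatcher` rather than anything X itself provides.

```python
from difflib import SequenceMatcher
from itertools import combinations


def similar_reply_pairs(replies, threshold=0.8):
    """Return pairs of different accounts whose replies are nearly identical.

    replies: list of (account, text) tuples, gathered by hand for this sketch.
    threshold: assumed cutoff on SequenceMatcher's 0-to-1 similarity ratio.
    """
    flagged = []
    for (acct_a, text_a), (acct_b, text_b) in combinations(replies, 2):
        if acct_a == acct_b:
            continue  # one account repeating itself is a separate signal
        ratio = SequenceMatcher(None, text_a.lower(), text_b.lower()).ratio()
        if ratio >= threshold:
            flagged.append((acct_a, acct_b))
    return flagged


if __name__ == "__main__":
    sample = [
        ("a1", "This is outrageous, share before they delete it!"),
        ("b2", "This is outrageous, share before they delete it!!"),
        ("c3", "Lovely photo of your dog."),
    ]
    print(similar_reply_pairs(sample))
```

Near-identical replies can also come from real people quoting the same slogan, so a flagged pair is a cue to look at the accounts more closely, not a confirmation that they are bots.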

Also, user verification on X is the least trustworthy among the major social media sites, since users can pay for "verified" status (in one Cambridge study, half of the synthetic profiles studied had verified status).

What can misinformation look like on Facebook?

It's especially difficult to silo yourself from AI-generated content on Facebook, even if you're only interested in posts from family and friends. Over the past three years, there has been a "significant increase" in the rate of users seeing posts they have no "friend" connection to, thanks to the algorithm that surfaces high-engagement posts in users' feeds.

Being disciplined about clicking "not interested" under the three dots on each post can help stem the flow, as can staying skeptical of images and being wary of link-outs to "news" sites. Independently verify posts about any news events, even those that appear to be from a harmless, real person.

Misleading posts on Facebook are also especially focused on getting users off Facebook, directing them to content farms, fake stores and other scam sites.

Stay alert and think critically online

Humans often overestimate how good they are at detecting AI: nice art is sometimes AI-generated, and terrible grammar is sometimes very human. It's not easy to navigate a landscape designed to trick you, but your best call is to improve how you critically consume all information. Stay curious. After all, AI gets better every passing day, right down to drawing those tricky hands.

Sarah Skinner is a senior editor at a NYC tech startup. She holds a degree from Cornell University on AI and empathy, and has previously worked for McKinsey & Company and the Associated Press.

The post Misinformation in the age of AI: It's in the details (like extra fingers) appeared first on The Mozilla Blog.