Researchers trick Tesla Autopilot into steering into oncoming traffic

(credit: Keen Security Lab)

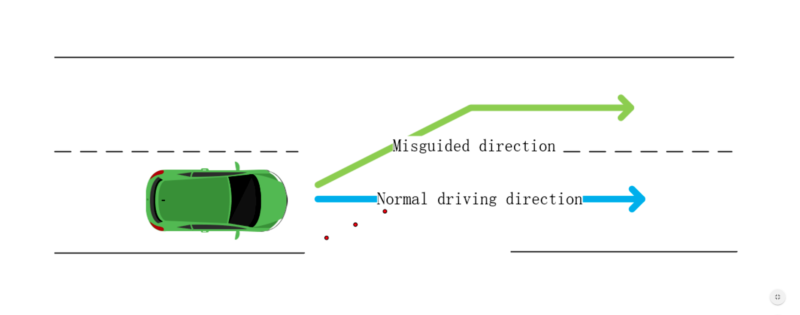

Researchers have devised a simple attack that might cause a Tesla to automatically steer into oncoming traffic under certain conditions. The proof-of-concept exploit works not by hacking into the car's onboard computing system, but by using small, inconspicuous stickers that trick the Enhanced Autopilot of a Model S 75 into detecting and then following a change in the current lane.

Tesla's Enhanced Autopilot supports a variety of capabilities, including lane-centering, self-parking, and the ability to automatically change lanes with the driver's confirmation. The feature is now mostly called "Autopilot" after Tesla reshuffled the Autopilot price structure. It primarily relies on cameras, ultrasonic sensors, and radar to gather information about its surroundings, including nearby obstacles, terrain, and lane changes. It then feeds the data into onboard computers that use machine learning to make judgments in real time about the best way to respond.

Researchers from Tencent's Keen Security Lab recently reverse-engineered several of Tesla's automated processes to see how they reacted when environmental variables changed. One of the most striking discoveries was a way to cause Autopilot to steer into oncoming traffic. The attack worked by carefully affixing three stickers to the road. The stickers were nearly invisible to drivers, but machine-learning algorithms used by the Autopilot detected them as a line that indicated the lane was shifting to the left. As a result, Autopilot steered in that direction.
