Google claims that updates to apps in the Android Play Store may soon become much smaller thanks to its use of a new algorithm named "Courgette", developed from bsdiff. According to Google, the algorithm can reduce the size of patches by up to 50%, and the company has previously used it for updates to the desktop Chrome browser.

Originally, updating an app meant downloading and installing the entire app, essentially replacing the existing version. Google has used diff-based (delta) updates since 2012, but this new algorithm takes advantage of the ways in which compiled native code changes between versions. It is most effective when libraries are stored uncompressed, but even compressed code can potentially see a 5% decrease in data usage. Although 5% isn't a dramatic saving, every little bit helps, especially on limited data plans.
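As a rough illustration of what a diff-based update actually ships, here is a toy copy/insert delta encoder in Python built on the standard library's `difflib.SequenceMatcher`. It is not bsdiff or Courgette (bsdiff, for example, builds a suffix array over the old file and stores approximate matches plus byte-wise differences), and the names `make_patch`, `apply_patch` and `download_size` are hypothetical. It only shows why a patch that reuses bytes already on the device can be far smaller than a full download.

```python
from difflib import SequenceMatcher

# A patch is a list of operations:
#   ("copy", start, length) -> reuse bytes the device already has
#   ("insert", data)        -> literal bytes that must be downloaded


def make_patch(old: bytes, new: bytes) -> list:
    """Describe `new` as copy/insert operations against `old` (toy delta encoder)."""
    ops = []
    matcher = SequenceMatcher(None, old, new, autojunk=False)
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        if tag == "equal":
            # These bytes are already on the device: reference them by offset.
            ops.append(("copy", i1, i2 - i1))
        elif tag in ("replace", "insert"):
            # Changed or added bytes have to be shipped literally.
            ops.append(("insert", new[j1:j2]))
        # "delete": old bytes are simply not copied into the new version.
    return ops


def apply_patch(old: bytes, ops: list) -> bytes:
    """Rebuild the new version from the old bytes plus the patch."""
    out = bytearray()
    for op in ops:
        if op[0] == "copy":
            _, start, length = op
            out += old[start:start + length]
        else:
            out += op[1]
    return bytes(out)


def download_size(ops: list) -> int:
    """Literal bytes carried by the patch (copy instructions are tiny by comparison)."""
    return sum(len(op[1]) for op in ops if op[0] == "insert")


if __name__ == "__main__":
    old = b"version=1;" + b"A" * 1000
    new = b"version=2;" + b"A" * 1000
    patch = make_patch(old, new)
    assert apply_patch(old, patch) == new
    print(len(new), download_size(patch))  # prints: 1010 1
```

The same sketch also hints at why compression hurts: a one-byte change in the uncompressed library can alter much of its compressed form, leaving far fewer long runs for `copy` operations to reuse.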

In the latest chapter of the MyGov government portal catastrophe, the Australian government is following in the footsteps of the UK and US governments by throwing 484 million dollars at IBM to somehow fix the problems with the ailing portal, about which tens of thousands of Australians have complained. The key problems reported by users concern system availability, stability, and a lack of clear usability for key activities. Some users report that the portal has kept them from receiving government payments they are due for months. Will IBM be able to do what contractors such as Accenture and HP have failed to do in other countries? Can MyGov be fixed at all, or will it need to be replaced? How does the recent joint venture between the ATO, DHS and the new DTO agency affect IBM joining the party? Will agile save the day or bury this problem deeper? Australian citizens, desperate to see some benefit from the millions spent integrating Medicare and Centrelink into one agency that promised to reduce costs and deliver more, are on the edge of their seats waiting to see what happens next in the MyGov saga.

Mozilla is relaunching its Thimble online code editor as an upgraded tool for teaching and learning web literacy in a simple, hands-on and visual fashion. Thimble makes it easy to create and publish your own web pages while learning HTML, CSS & JavaScript. Users write and edit HTML, CSS, and JavaScript on the left side of the screen and watch their code come to life on the right. Learners can start with simple remixes, changing words and images on a page to familiarize themselves with code and build confidence, and can then work their way toward creating entire web pages from scratch. Thimble is free and open source.

Mozilla has been rethinking its add-on architecture for browser extensions, and has just made an announcement that may have profound implications for developers and browser users everywhere:

"Mozilla today announced major changes to how Firefox will implement add-ons going forward. The most important of these is the adoption of a new extension API that will be largely compatible with the one currently in use by Blink-based browsers like Chrome and Opera. This so-called WebExtensions API will ensure that developers will only have to make a few small changes to their code for their add-on to run on Firefox. 'We would like add-on development to be more like Web development: the same code should run in multiple browsers according to behavior set by standards, with comprehensive documentation available from multiple vendors,' Mozilla's Kev Needham writes in today's announcement."

Sources: http://techcrunch.com/2015/08/21/chrome-extensions-are-coming-to-firefox/, http://www.thetimesgazette.com/mozilla-on-track-to-modernize-firefox-add-on-systems-and-extension-leaves-developers-unhappy/6502/, https://wiki.mozilla.org/WebExtensions

Not everyone is happy about it. The developer of the popular DownThemAll browser extension has proclaimed this move to be the end of his extension, and potentially many others. He says:

> Gone with DownThemAll! will be add-ons that e.g. let you change major bits about the Firefox user interface (e.g. tabs tree add-ons), add-ons that allow you to do more "advanced" stuff than just showing or slightly altering websites, such as e.g. restarting the browser upon click (unless mozilla kindly provides an API for that, which won't be compatible with Chrome, of course). Add-ons like NoScript will be severely limited in their feature set as well. Say byebye to Greasemonkey and hello to Tampermonkey, with its limitations. Want that add-on that lets you change the new tab page for something else or enhances that page? Maybe it will be available, maybe not, depending on if and when mozilla kindly provides WebExtensions APIs for such things. And of course, depending on if there will be an author creating this entirely new add-on from scratch.
>
> What this also means: Almost all your existing add-ons will be broken, entirely, save for some Add-on SDK add-ons, namely those that don't do anything fancy. Sure, even today, lots of add-ons break, and some add-ons will not get updated when they do and there are no suitable replacements. However, with this change, almost every add-on will be completely broken and in need of major updating by the extension authors. Good luck with that.

As of today, the press statement is out for the release of Oracle's VirtualBox 5.0. This comes 4-6 months after an inquiry began into whether Oracle had abandoned further development of VirtualBox following 2013's v4.3. InfoWorld's April 2, 2015 review of the VirtualBox 5.0 beta states: "don't expect anything truly revolutionary... but its main advantage over VMware remains with its offer of a free incarnation of many of the same core features."

Highlights of the new release include support for USB 3.0 devices and disk image encryption, among other things.

In the software world, it's long been the practice that you don't purchase software, you purchase a license to use it. But as software is increasingly woven into other products, like the many chips and circuits that run your modern automobile, this practice starts to chip away at the traditional sense of ownership of physical goods.

In a particularly spectacular display of corporate delusion, John Deere, the world's largest agricultural machinery maker, told the Copyright Office that farmers don't own their tractors. Because computer code snakes through the DNA of modern tractors, farmers receive "an implied license for the life of the vehicle to operate the vehicle."

It's John Deere's tractor, folks. You're just driving it.

Several manufacturers recently submitted similar comments to the Copyright Office under an inquiry into the Digital Millennium Copyright Act. DMCA is a vast 1998 copyright law that (among other things) governs the blurry line between software and hardware. The Copyright Office, after reading the comments and holding a hearing, will decide in July which high-tech devices we can modify, hack, and repair, and decide whether John Deere's twisted vision of ownership will become a reality.

It's a conversation with profound implications for the future.

Check out the rest at Wired.

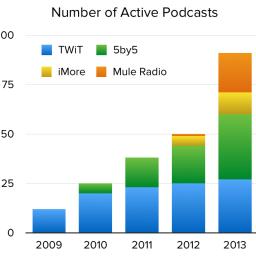

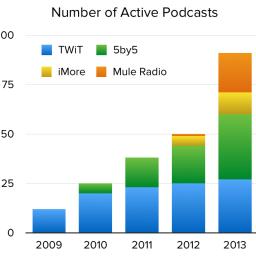

Plenty of apps have been developed and released that make it easy to listen to podcasts. Amazingly, though, the software used to create podcasts hasn't evolved much over the years.

Many podcasters have spent the last week sharing how they record their shows, and while the hardware is fun to argue about, the software story leaves a lot to be desired. Casey Liss describes his software setup for recording ATP: Skype, Piezo, Skype Call Recorder, and Google Docs. Jason Snell recommends getting a microphone with a headphone jack to compensate for Skype's lack of local input monitoring. If you can stomach it, you can listen to Dan Benjamin describe the ridiculous lengths he's gone to to record multiple guests in real time, and he still ends up dealing with Skype artifacts. This is a professional podcaster with a half-dozen Macs dedicated to the task of getting reasonable audio from remote guests. Ugh.

Allen Pike has some interesting thoughts on the subject, as well as a previous article detailing the "fall and rise of podcasting". As an avid podcast listener, I agree the field is ripe for improvement. What do you think? And which podcasts do you enjoy listening to?

More than three years ago, in January 2011, FFmpeg was forked by part of its development team into Libav. By the end of that year, the fork had replaced FFmpeg in Debian's packages; notably, the binary in the ffmpeg package marked itself as deprecated and recommended that users switch to avconv instead. As the split didn't happen in the most friendly way (to say the least), these events sparked plenty of debate and flame wars, and it is quite difficult to find articles on the topic that are not biased one way or the other.

In November 2013, a bug report was filed asking Debian to reintroduce an actual ffmpeg package and all the associated libraries. Fast forward to mid-September 2014: after some technical discussions and soname changes (all FFmpeg libraries with a libav* name have been renamed to libav*-ffmpeg), ffmpeg has been quietly reintroduced into Debian unstable, and it might even arrive just in time to be included in the Jessie release.

Let's hope this solution where both versions can co-exist will help calm things down.
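Why the rename lets both projects coexist can be modelled in a few lines of Python: two packages cannot both ship a library file with the same name, so giving FFmpeg's copies a suffix removes the clash. This is a toy sketch of the renaming rule as described above, not Debian's packaging tooling; the library list is a representative subset, the helper names are hypothetical, and real packaging also involves soname version numbers, which it ignores.

```python
from fnmatch import fnmatchcase

# Library names shipped by both FFmpeg and Libav (a representative subset).
SHARED_NAMES = ["libavcodec", "libavformat", "libavutil", "libavfilter", "libavdevice"]


def ffmpeg_package_name(lib: str) -> str:
    """Apply the rule described above: libav* -> libav*-ffmpeg; other names unchanged."""
    return f"{lib}-ffmpeg" if fnmatchcase(lib, "libav*") else lib


def collisions(a: list, b: list) -> set:
    """Names that two sets of packages would both try to install."""
    return set(a) & set(b)


if __name__ == "__main__":
    libav_side = SHARED_NAMES
    ffmpeg_side = [ffmpeg_package_name(name) for name in SHARED_NAMES]
    print(collisions(libav_side, SHARED_NAMES))  # before the rename: every name clashes
    print(collisions(libav_side, ffmpeg_side))   # after the rename: set()
```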

The worm and/or vulnerability they're now calling "Shellshock" has soured sysadmins on the Bash shell for the moment and brought attention to a new point of entry for web-based server penetration attacks. Fortunately, some researchers at Harvard have been thinking about problems like this and have come up with a solution.

It's a new scripting language called "Shill" and it's intended to limit the resources and privileges scripts have when running.

The language, called Shill, was designed to limit shell-based scripts so they can't access resources beyond what is specifically needed for the task at hand. "You want to give the script exactly the permissions it needs to get its job done," said Scott Moore, a computer science doctoral student at Harvard who is one of the contributors to the Shill research project, led by Stephen Chong, an associate professor of computer science.

The team is working on a version of Shill for the FreeBSD Unix operating system and is mulling the idea of porting it to Linux. The team will also present the technology next week at the USENIX Symposium on Operating Systems Design and Implementation conference, in Broomfield, Colorado. Shill follows the principle of least privilege, which stipulates that software shouldn't possess more authority than what it needs to complete its job, Moore said.

Sounds like this might be useful for more reasons than simple exploit prevention, too!
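Shill's own syntax isn't shown here. As a rough illustration of the least-privilege idea only, the Python sketch below hands a "script" a capability object (a hypothetical `ReadOnlyDir` class) that can list and read files in one directory and nothing else. Python does not enforce this (the function could still import `os` and roam the filesystem), whereas Shill aims to enforce such limits for real; the sketch just shows the shape of "give the script exactly the permissions it needs".

```python
import tempfile
from pathlib import Path


class ReadOnlyDir:
    """Hypothetical capability: read-only access to regular files directly inside one directory."""

    def __init__(self, root):
        self._root = Path(root).resolve()

    def list_files(self) -> list:
        return sorted(p.name for p in self._root.iterdir() if p.is_file())

    def read_text(self, name: str) -> str:
        path = (self._root / name).resolve()
        # Refuse anything that escapes the granted directory (e.g. "../secret").
        if path.parent != self._root:
            raise PermissionError(f"{name!r} is outside the granted directory")
        return path.read_text()


def count_log_lines(logs: ReadOnlyDir) -> int:
    """A 'script' that only uses the capability it was handed: no writes, no other paths."""
    return sum(len(logs.read_text(name).splitlines()) for name in logs.list_files())


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as d:
        Path(d, "a.log").write_text("one\ntwo\n")
        Path(d, "b.log").write_text("three\n")
        cap = ReadOnlyDir(d)
        print(count_log_lines(cap))  # prints: 3
        try:
            cap.read_text("../secret")
        except PermissionError as err:
            print("denied:", err)
```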

In 2005, work began on a project headed by Andrei Zmievski to bring native Unicode support to PHP by embedding the International Components for Unicode (ICU) library and internally representing strings as UTF-16. Because this project would lead to major internal and user-affecting changes, it was planned to be the next major PHP version (i.e. version 6) along with a few other features.

Using UTF-16 as the default encoding meant that source code and all input (e.g. data from requests, databases, etc.) would need to be converted from its original encoding to UTF-16 and back again. This conversion costs a lot of CPU time and memory (to store the much larger strings), and it adds implementation complexity because of the greater need to detect the proper encoding for each situation. In light of all this and the relatively small gain, many contributors became unwilling to use "trunk" as their main development branch and instead either used the stable 5.2/5.3 branches or refused to do development at all. This shortage of developers led to delays in the project.
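The size and conversion costs described above are easy to see in a small Python sketch. It compares how many bytes a string needs in UTF-8 versus UTF-16, and performs the decode/re-encode round trip that every piece of input would have needed under a UTF-16-internal design. PHP 6 did this in C via ICU, not like this, and both function names are hypothetical.

```python
def encoded_sizes(text: str) -> tuple:
    """Bytes needed for `text` as (UTF-8, UTF-16 little-endian without a byte-order mark)."""
    return len(text.encode("utf-8")), len(text.encode("utf-16-le"))


def php6_style_round_trip(raw: bytes) -> bytes:
    """Hypothetical per-request path: UTF-8 input -> UTF-16 internal string -> UTF-8 output."""
    internal = raw.decode("utf-8").encode("utf-16-le")  # convert on the way in
    return internal.decode("utf-16-le").encode("utf-8")  # and again on the way out


if __name__ == "__main__":
    print(encoded_sizes("SELECT * FROM users"))  # (19, 38)
    print(encoded_sizes("<p>héllo</p>"))         # (13, 24)
    print(encoded_sizes("日本語"))                # (9, 6)
```

For mostly-ASCII data such as SQL, HTML and source code, UTF-16 roughly doubles the size; it only wins for text dominated by characters such as CJK ideographs, which take three bytes each in UTF-8 but two in UTF-16.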

In 2009, PHP 5.3 was released with many non-Unicode features back-ported from PHP 6, most notably namespaces. This became the widely used, stable version of PHP, and in March 2010 the PHP 6 project was officially abandoned; instead, PHP 5.4 was prepared, containing most of the remaining non-Unicode features from PHP 6, such as traits and closure re-binding.

Why Jump to PHP7?

After a vote in July 2014, it was officially decided that the next major release would be called PHP7. The primary reason for skipping the name PHP 6 was the widely known earlier failed attempt at a new major release under that name, along with the numerous books and other resources that already referred to the previous PHP 6. To address potential confusion, there was an RFC (request for comments) and a vote on whether or not to reuse the name.

Read the rest at the Halls of Valhalla.